Stop Drowning in PDFs: How to Automate Research Synthesis with AI

Imagine this scenario: It’s 2:00 AM. You have 47 tabs open in your browser. Your desktop is a graveyard of files named final_paper_v2_REALLY_final.pdf. You have a literature review due in three days, and you’ve barely scratched the surface.

We’ve all been there. The sheer panic of the “information overload” phase is something every researcher, analyst, and PhD student knows intimately.

For decades, the unspoken rule of academic rigor was simple: Read everything. If you wanted to understand a field, you had to read every line of every paper linearly. But let’s be honest—in an era where millions of new papers are published annually, that “brute force” method isn’t a badge of honor anymore. It’s a bottleneck.

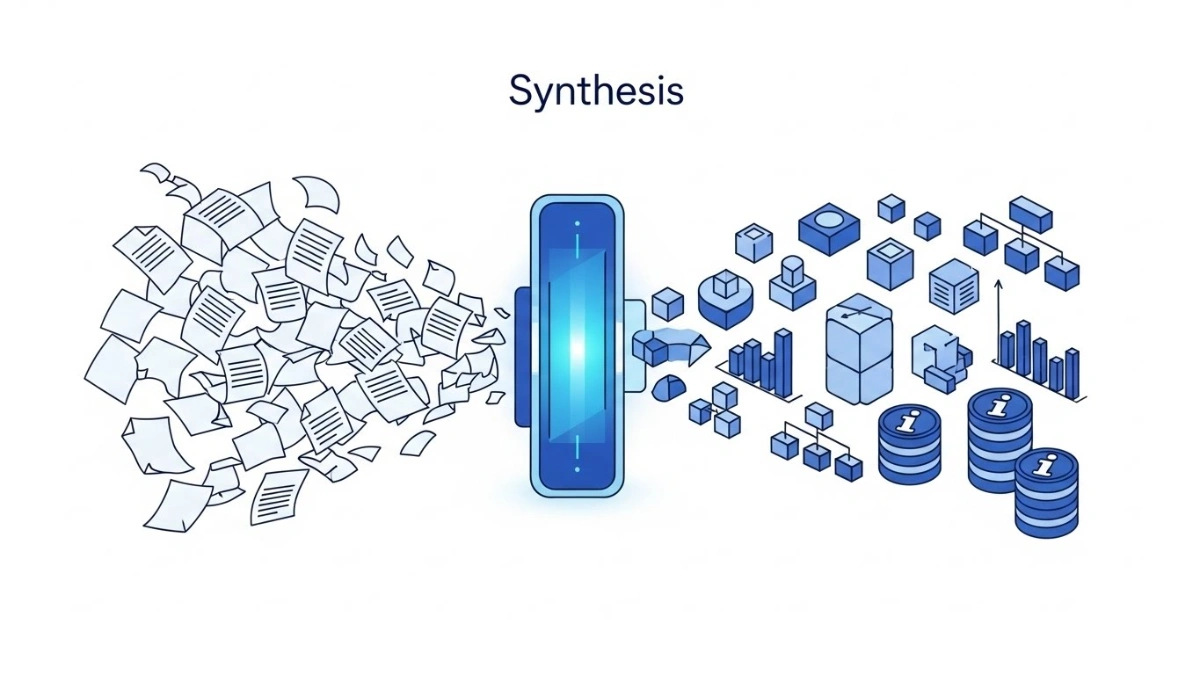

Here is the good news: You can stop reading 100 papers and start synthesizing them instead. By treating AI tools not as authors, but as tireless research assistants, you can automate the grunt work and reclaim your brain space for the actual thinking.

Here is your step-by-step workflow to turn that mountain of PDFs into actionable insights.

Key Takeaways

- Shift Your Mindset: Move from “consumption” (reading line-by-line) to “curation” (extracting specific data points).

- Preparation is Everything: AI works best with structured questions. Use a scoping framework like PICOS before you start.

- The “Matrix” Method: Don’t ask for summaries. Ask the AI to extract data into a structured format (like JSON or tables).

- Human in the Loop: AI is your assistant, not your replacement. Always spot-check its output and verify citations to catch hallucinations.

Phase 1: The Setup (Garbage In, Garbage Out)

Before you even open an AI tool, we need to talk about data hygiene. The biggest mistake I see researchers make is throwing a messy PDF at an AI and asking, “What does this say?”

That is a recipe for generic, useless fluff. To get gold, you need to refine the ore first.

1. Define Your Scope with Precision

An AI cannot read your mind. You need to build an Extraction Matrix: a spreadsheet plan whose columns list exactly what you are looking for in each paper.

I recommend using the PICOS framework to define your columns:

- Population: Who are the subjects? (e.g., Remote workers)

- Intervention: What is being tested? (e.g., Asynchronous communication tools)

- Comparison: What is the control? (e.g., Real-time meetings)

- Outcome: What is the result? (e.g., Productivity scores)

- Study Design: How was the study conducted? (e.g., Longitudinal survey)

Action Step: Create a list of 5–8 specific questions based on these categories. Don’t ask “Summarize the paper.” Ask, “What was the specific sample size and what were the inclusion criteria?”
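If you want the matrix to live somewhere more durable than your head, you can write it down as data. The sketch below is one hypothetical way to do that in Python: each column pairs a PICOS category with one specific question, using the remote-work examples above. The names `MatrixColumn`, `EXTRACTION_MATRIX`, `header_row`, and `check_coverage` are illustrative, not part of any tool.

```python
from dataclasses import dataclass

# Hypothetical example: one specific question per column, grouped by PICOS category.
@dataclass(frozen=True)
class MatrixColumn:
    key: str       # short column name for the spreadsheet / JSON output
    category: str  # which PICOS element the column belongs to
    question: str  # the exact question the AI must answer for every paper

EXTRACTION_MATRIX = [
    MatrixColumn("population", "Population",
                 "Who were the subjects, and what were the inclusion criteria?"),
    MatrixColumn("sample_size", "Population",
                 "What was the exact sample size?"),
    MatrixColumn("intervention", "Intervention",
                 "Which asynchronous communication tools were tested?"),
    MatrixColumn("comparison", "Comparison",
                 "What was the control condition (e.g., real-time meetings)?"),
    MatrixColumn("outcome", "Outcome",
                 "How was productivity measured, and what was the result?"),
    MatrixColumn("study_design", "Study Design",
                 "What was the study design and its duration?"),
]

PICOS = ("Population", "Intervention", "Comparison", "Outcome", "Study Design")

def header_row(matrix: list[MatrixColumn]) -> list[str]:
    """Spreadsheet header: the source file, then one column per question."""
    return ["file"] + [col.key for col in matrix]

def check_coverage(matrix: list[MatrixColumn]) -> list[str]:
    """Return the PICOS categories that do not have a question yet."""
    covered = {col.category for col in matrix}
    return [category for category in PICOS if category not in covered]

if __name__ == "__main__":
    print(header_row(EXTRACTION_MATRIX))
    print(check_coverage(EXTRACTION_MATRIX))  # an empty list means full PICOS coverage
```

Keeping the questions in one place means the same list can feed your spreadsheet header and your prompt, so the two never drift apart.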

2. Prep Your Files

PDFs are designed for human eyes, not machine logic. Headers, footers, and floating images can confuse standard AI models.

- File Hygiene: Rename your files consistently (e.g., Author_Year_Topic.pdf).

- Conversion: If possible, convert your PDFs to clean text (.txt) or Markdown. This strips away the visual noise and leaves the pure data for the AI to process.
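Both prep steps are easy to script. The sketch below assumes you already have each PDF's text as a list of per-page strings from whatever converter you use (for example, pypdf's `PdfReader(path).pages` with `page.extract_text()`). The helpers `standard_filename` and `strip_headers_footers` are hypothetical names, and the rule "a line that repeats on most pages is a header or footer" is a simple heuristic, not a guarantee.

```python
import re
from collections import Counter

def standard_filename(author: str, year: int, topic: str) -> str:
    """Build an Author_Year_Topic.pdf name, e.g. ("Smith", 2021, "async work")."""
    def clean(part: str) -> str:
        # Keep letters and digits (Unicode-aware) and join words with hyphens,
        # so the underscores remain unambiguous field separators.
        return "-".join(re.findall(r"[^\W_]+", part))
    return f"{clean(author)}_{year}_{clean(topic)}.pdf"

def strip_headers_footers(pages: list[str], min_share: float = 0.6) -> str:
    """Drop running headers/footers and bare page numbers from per-page text.

    A line counts as a header/footer if it appears on at least `min_share`
    of the pages (and on at least two pages). Blank lines are dropped too.
    """
    counts = Counter()
    for page in pages:
        # Count each distinct line once per page.
        counts.update({line.strip() for line in page.splitlines() if line.strip()})
    threshold = max(2, int(min_share * len(pages)))
    repeated = {line for line, n in counts.items() if n >= threshold}

    kept = []
    for page in pages:
        for line in page.splitlines():
            s = line.strip()
            if not s or s in repeated:
                continue
            if re.fullmatch(r"(page\s*)?\d+", s, flags=re.IGNORECASE):
                continue  # bare page number such as "12" or "Page 12"
            kept.append(s)
    return "\n".join(kept)
```

For example, `standard_filename("Smith", 2021, "async work")` gives `Smith_2021_async-work.pdf`. Skim the cleaned text of a paper or two before trusting the heuristic, since a genuinely repeated line (such as a recurring table label) would also be removed.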

Phase 2: The Execution (Your AI Research Assistant)

Now that you have your data and your questions, it’s time to put the AI to work.

Choosing Your Tool

You don’t need to overcomplicate this.

- For Discovery: Use dedicated academic search engines (like Elicit or Consensus) to find the papers and get a bird’s-eye view.

- For Deep Extraction: Use Large Language Models (LLMs) with long context windows that can handle entire papers in one pass.

- For Privacy: If you are dealing with sensitive proprietary data, look into local LLMs that run on your own machine so data never leaves your laptop.
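Whether a model “can handle long documents” comes down to its context window, measured in tokens. A quick pre-check can tell you whether a paper plus your prompt is likely to fit. The sketch below rests on a loudly labeled assumption: roughly 4 characters per token for English text, which is a common rule of thumb and not a real tokenizer. The function names and the 2,000-token output reserve are hypothetical; use your model's documented context window.

```python
import math

# Assumption: ~4 characters per token for English prose. Real tokenizers vary,
# so treat this as a rough estimate and leave a safety margin.
CHARS_PER_TOKEN = 4

def estimate_tokens(text: str) -> int:
    """Rough token count for a piece of text."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)

def fits_in_context(paper_text: str, prompt: str, context_window: int,
                    reserve_for_output: int = 2000) -> bool:
    """True if paper + prompt + room for the model's answer fit the window."""
    needed = estimate_tokens(paper_text) + estimate_tokens(prompt) + reserve_for_output
    return needed <= context_window
```

If a paper does not fit, a long-context model or sending only the methods and results sections are the usual options.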

💡 Pro Tip: The “Master Extraction” Prompt

This is where the magic happens. Do not chat with the AI; program it. Use a prompt structure that defines a Role, Task, Format, and Constraint.

The Blueprint:

- Role: You are a senior research scientist. You are skeptical, precise, and detail-oriented.

- Task: Extract the following data points from the text below.

- Format: Output the data in a strict JSON format (or a Markdown table).

- Constraint: Do not summarize the introduction. If information is missing, write “Not Specified.” Do not guess.
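Because the blueprint is fixed and only the paper changes, you can assemble the prompt in code and reuse it for every file. This is a minimal sketch: `build_extraction_prompt` is a hypothetical helper, the section labels and the `<<<`/`>>>` delimiters around the paper are illustrative choices rather than a required syntax, and the questions dictionary maps each JSON key to one extraction question.

```python
import json

ROLE = ("You are a senior research scientist. "
        "You are skeptical, precise, and detail-oriented.")
CONSTRAINTS = ("Do not summarize the introduction. "
               'If information is missing, use the value "Not Specified". Do not guess.')

def build_extraction_prompt(questions: dict[str, str], paper_text: str) -> str:
    """Assemble Role, Task, Format, and Constraint into one prompt string."""
    # One line per data point: the JSON key followed by its question.
    task_lines = "\n".join(f"- {key}: {q}" for key, q in questions.items())
    # A JSON skeleton tells the model exactly which keys to return.
    skeleton = json.dumps({key: "..." for key in questions}, indent=2)
    return (
        f"ROLE: {ROLE}\n\n"
        f"TASK: Extract the following data points from the text below.\n{task_lines}\n\n"
        f"FORMAT: Output only a JSON object with exactly these keys:\n{skeleton}\n\n"
        f"CONSTRAINTS: {CONSTRAINTS}\n\n"
        f"TEXT:\n<<<\n{paper_text}\n>>>"
    )

if __name__ == "__main__":
    questions = {"sample_size": "What was the exact sample size?"}
    print(build_extraction_prompt(questions, "We surveyed 120 remote workers."))
```

Putting the paper last and clearly delimited keeps your instructions separate from the source text.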

Why this works: Asking for a specific format (like JSON or a table) steers the AI toward structured output. It stops “creative writing” and starts “data processing.”
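Asking for JSON does not guarantee you get clean JSON back, so it helps to check each reply before it goes into your matrix. The sketch below is a hypothetical `parse_extraction` helper: it pulls the JSON object out of the reply (models sometimes wrap it in prose or code fences), fills absent or empty answers with “Not Specified,” and reports missing or unexpected keys so you can rerun or fix that paper.

```python
import json

NOT_SPECIFIED = "Not Specified"

def parse_extraction(reply: str, expected_keys: list[str]) -> tuple[dict[str, str], list[str]]:
    """Turn a model reply into one matrix row plus a list of schema problems."""
    # Take the span from the first "{" to the last "}" to skip surrounding prose.
    start, end = reply.find("{"), reply.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in reply")
    data = json.loads(reply[start:end + 1])  # raises ValueError on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("reply JSON is not an object")

    row, issues = {}, []
    for key in expected_keys:
        if key not in data:
            issues.append(f"missing key: {key}")
        value = data.get(key)
        if value is None or (isinstance(value, str) and not value.strip()):
            row[key] = NOT_SPECIFIED  # absent or empty answers become "Not Specified"
        elif isinstance(value, str):
            row[key] = value.strip()
        else:
            row[key] = json.dumps(value)  # numbers, lists, etc. stored as JSON text
    for key in sorted(set(data) - set(expected_keys)):
        issues.append(f"unexpected key: {key}")
    return row, issues
```

A non-empty issue list is a cue to look at that paper by hand, not just to patch the row.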

Phase 3: The Human Touch (Verification)

Here is the uncomfortable truth: AI hallucinates. It might invent a sample size or misinterpret a p-value because it “looks” right.

This is why we implement the “Human in the Loop” Protocol.
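One cheap way to catch an invented sample size before a human ever looks at it is to flag any number in the AI's answer that never appears in the source text. The sketch below is a hypothetical `ungrounded_numbers` helper and only a pre-filter: a number can appear in the paper and still be attached to the wrong variable, so it narrows where to look rather than replacing the spot-checks below.

```python
import re

def _numbers(text: str) -> set[str]:
    """Numbers in a string, with thousands separators removed ("1,204" -> "1204")."""
    return {n.replace(",", "") for n in re.findall(r"\d[\d,]*(?:\.\d+)?", text)}

def ungrounded_numbers(extracted: dict[str, str], source_text: str) -> dict[str, list[str]]:
    """For each field, list numbers in the AI's answer that never occur in the source."""
    source_numbers = _numbers(source_text)
    flagged = {}
    for field, value in extracted.items():
        missing = sorted(_numbers(value) - source_numbers)
        if missing:
            flagged[field] = missing
    return flagged

if __name__ == "__main__":
    source = "We surveyed 1,204 remote workers over 6 months (p = 0.03)."
    answer = {"sample_size": "1204", "duration": "6 months", "p_value": "0.01"}
    print(ungrounded_numbers(answer, source))
```

Here the invented p-value of 0.01 is flagged because only 0.03 appears in the source, while the sample size and duration pass.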

The Spot-Check Rule

Never blindly copy-paste AI output into your thesis or report.

- Audit the First 5: When you start, manually read the first five papers and compare them to the AI’s extraction. Is it accurate? If not, tweak your prompt.

- Random Sampling: Even after you trust the prompt, randomly audit 10% of the papers.

- Fact-Check Claims: If the AI makes a bold claim (e.g., “This study proves X”), go back to the source text and verify.
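The first two rules can be turned into a reproducible audit list and a simple score. In this sketch, `audit_set` and `agreement` are hypothetical helpers, and applying the 10% to the papers that remain after the first five is one reading of the rule above. Agreement here is exact match after ignoring case and extra spaces, which is deliberately strict: a low score on the calibration papers means “go tweak the prompt,” not “the AI is wrong.”

```python
import random
from typing import Optional

def audit_set(papers: list[str], first_n: int = 5, percent: int = 10,
              seed: Optional[int] = None) -> list[str]:
    """The first `first_n` papers for calibration, plus a random `percent` of the rest."""
    calibration, rest = papers[:first_n], papers[first_n:]
    k = (len(rest) * percent + 99) // 100  # integer ceiling, so 10% of 45 papers -> 5
    rng = random.Random(seed)  # a fixed seed makes the audit list reproducible
    return calibration + rng.sample(rest, k)

def agreement(ai_row: dict[str, str], manual_row: dict[str, str]) -> float:
    """Share of fields where the AI's value matches your manual extraction."""
    if not manual_row:
        raise ValueError("manual_row is empty")
    def norm(s: str) -> str:
        return " ".join(s.lower().split())  # ignore case and extra whitespace
    matches = sum(norm(ai_row.get(k, "")) == norm(v) for k, v in manual_row.items())
    return matches / len(manual_row)
```

With 50 papers, `audit_set` returns the first five plus five random picks from the remaining 45, so you read ten papers in full instead of fifty.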

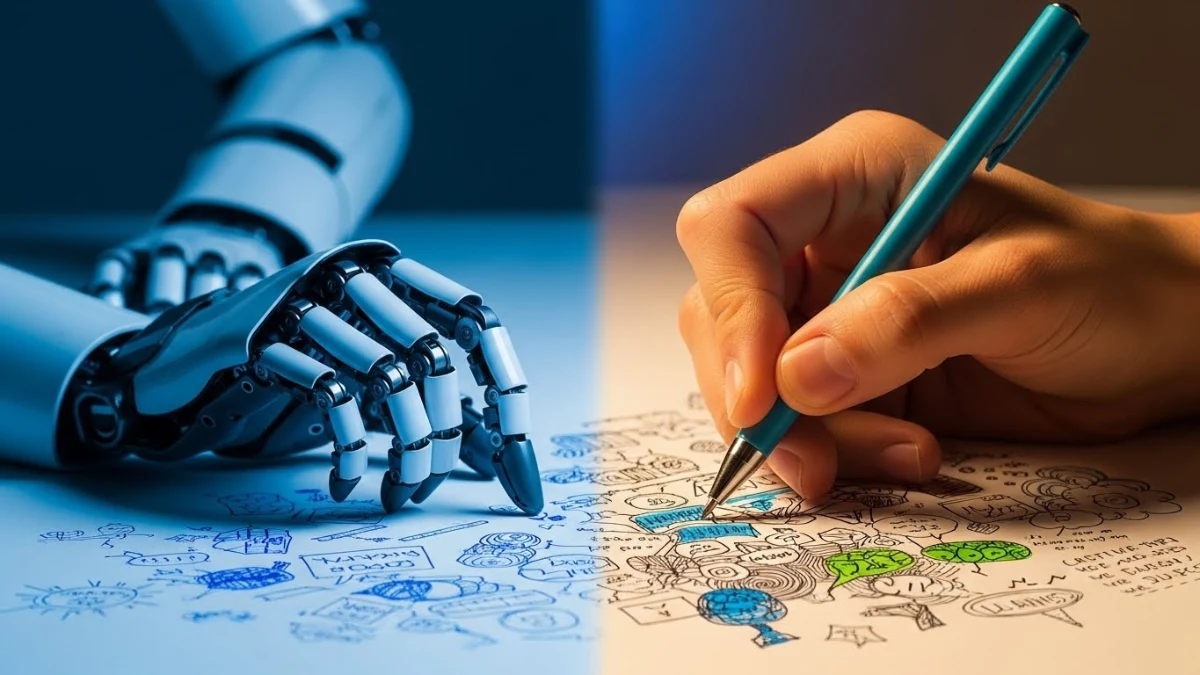

Remember, the AI is the Junior Research Assistant. You are the Principal Investigator. You sign off on the final quality.

Conclusion: Reclaiming Your Mental Energy

The future of research isn’t about reading faster; it’s about engineering a workflow that separates signal from noise.

By automating the extraction phase, you aren’t “cheating.” You are freeing up your mental energy for the high-level synthesis, the critical thinking, and the connecting of the dots that machines simply cannot do.

So, close those 47 tabs. Organize your files. Run your extraction prompt. And get back to actually thinking.

Ready to streamline your workflow?

Don’t let the technology intimidate you. Start small with just 3–5 papers today.

Frequently Asked Questions

Is using AI for literature review considered cheating?

No, provided it is used correctly. Using AI to generate text and passing it off as your own is academic dishonesty. However, using AI as a tool for extraction, structuring, and organizing data is akin to using statistical software or reference managers. The critical analysis and final writing remain your responsibility.

What is the biggest risk of using AI for research?

Hallucination. AI models can generate plausible-sounding but factually incorrect data. They might invent a statistic or misinterpret a methodology. This is why human verification and “spot-checking” against the original source material are non-negotiable.

Can I use this method for papers in foreign languages?

Yes! Modern AI models generally handle cross-lingual tasks well. You can input a paper in German or Mandarin and ask the AI to extract findings in English. This opens up a large body of literature that was previously hard for monolingual researchers to access.